Your weekend reading from Web Directions

This week, something new: I’ve split my introductory essay, which pulls on the threads of this week’s reading, out from the articles themselves. So you can read either, or both!

In other news…

Introducing Noops

Long time Web Directions speaker and friend Mark Pesce and I have sorted something new, Noops. Part research, part advisory, part punk, part guerilla. We’ll surface what we see happening in real time.

Join up for free today

More AI Engineer Speakers announced

The program is shaping up as incredible and timely, and we keep announcing speakers for an event you genuinely will not want to miss. This will sell out. Early bird closes March 31st.

AI & DEVELOPER PRODUCTIVITY

So where are all the AI apps?

AI, Economics, Software Engineering

Fans of vibecoding and agentic tools say they are 2x as productive, 10x as productive – maybe 100x as productive! Someone built an entire web browser from scratch. Amazing!

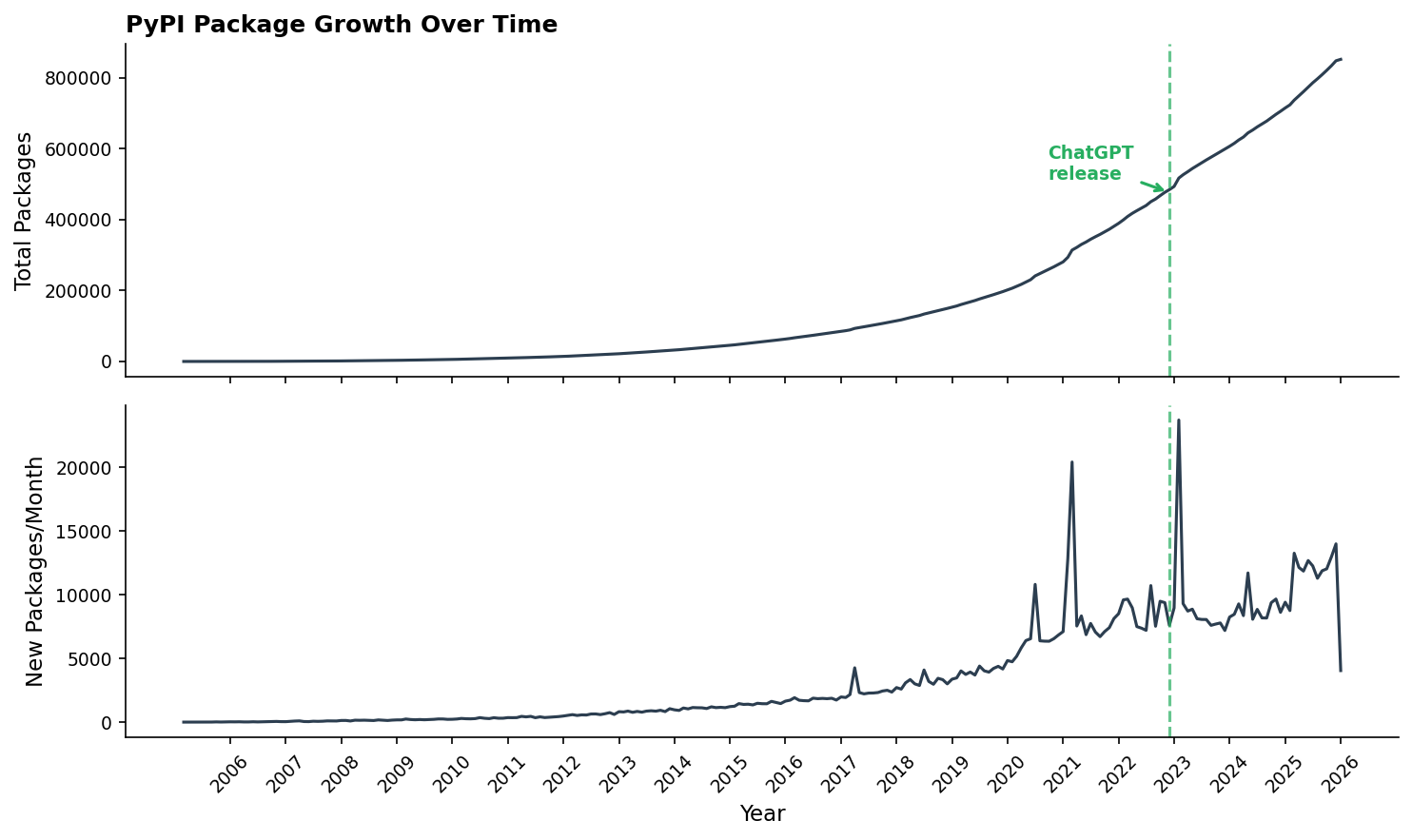

So, skeptics reasonably ask, where are all the apps? If AI users are becoming (let’s be conservative) merely 2x more productive, then where do we look to see 2x more software being produced? Such questions all start from the assumption that the world wants more software, so that if software has gotten cheaper to make then people will make more of it. So if you agree with that assumption, then where is the new software surplus, what we might call the “AI effect”?

Source: So where are all the AI apps?, Answer.AI

Anecdotally, many developers are reporting significant improvements in their productivity. So it’s fair to ask: where is all the software they are developing? The folks from Answer.AI examined the Python ecosystem—not a bad place to look for signals given Python’s intimate connection to AI and software development. In short, they find no particularly strong signals of an uptick in developer productivity.

Software Bonkers

I’m software bonkers: I can’t stop thinking about software. And I can’t stop building software.

My first Claude Code project was to rebuild Twitter as I always thought it should be:

Surprise! It’s really lovely. And members from my membership program have used it this past year to form a community the likes of Ye Internet of Yore. We share nice, inspiring things, and are nice and inspiring to one another.

Source: Software Bonkers, Craig Mod

We recently posted a piece from Answer.AI asking where all the AI-developed software is if software developers are now many times more productive. They analyzed the Python ecosystem and found at best three weak signals for a developer productivity increase. Perhaps they weren’t looking in the right place. In Craig’s experience, this new software isn’t necessarily on GitHub, npm, or PyPI; it’s running on local hosts and private cloud instances. There are a dozen or more applications running internal systems for conferences, exploring ideas with IoT, and more.

The Middle Loop

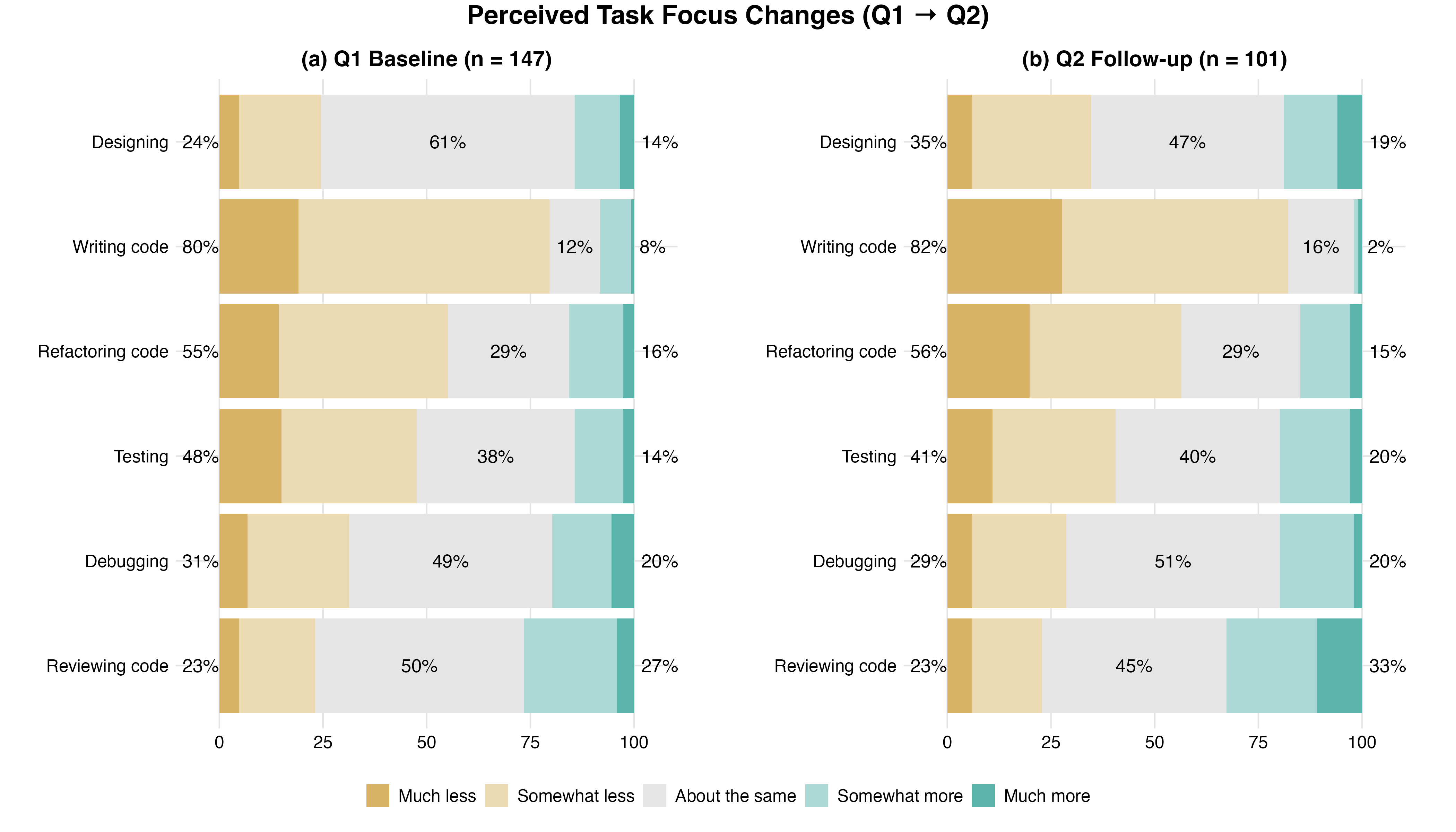

That’s why the first research question I wanted to answer as part of my Masters of Engineering at the University of Auckland, supervised by Kelly Blincoe, was about task focus. Are AI tools shifting where engineers actually spend their time and effort? Because if they are, they’re implicitly shifting what skills we practice and, ultimately, the definition of the role itself.

Ok so engineers are spending less time writing code, no surprise there. But the standard assumption, that freed-up time flows upstream into design and architecture, didn’t hold. Time compressed across almost all six tasks, including design. Rather than trading writing time for design time, engineers reported spending less time on nearly everything.

Source: The Middle Loop, Annie Vella

Annie Vella, conducting research into developer workflows as part of a Master’s program, has been tracking how agentic software development tools are impacting the practice of software engineering.

CODE VERIFICATION & QUALITY

Google Engineers Launch ‘Sashiko’ For Agentic AI Code Review Of The Linux Kernel

AI, AI Engineering, Code Review

Google engineers have been spending the past number of months developing Sashiko as an agentic AI code review system for the Linux kernel. It’s now open-source and publicly available and will continue to do upstream Linux kernel code review thanks to funding from Google.

Roman Gushchin of Google’s Linux kernel team announced Sashiko yesterday as this new agentic AI code review system. They have been using it internally at Google for some time to uncover issues and it’s now publicly available and covering all submissions to the Linux kernel mailing list. Roman reports that Sashiko was able to find around 53% of bugs based on an unfiltered set of 1,000 recent upstream Linux kernel issues with “Fixes:” tags.

“In my measurement, Sashiko was able to find 53% of bugs based on a completely unfiltered set of 1000 recent upstream issues based on “Fixes:” tags (using Gemini 3.1 Pro). Some might say that 53% is not that impressive, but 100% of these issues were missed by human reviewers.”

Source: Google Engineers Launch ‘Sashiko’ For Agentic AI Code Review Of The Linux Kernel, Phoronix

One frequent observation about software engineering and AI is that writing code has traditionally not been the bottleneck in software production—it’s only one small part of the responsibilities of software engineers. Verifying correctness, quality assurance, and debugging are clearly significant parts of the process. Here’s the system that Google has been developing for reviewing incredibly complex code—the Linux kernel.

Toward automated verification of unreviewed AI-generated code

AI, Code Review, Software Engineering

I’ve been wondering what it would take for me to use unreviewed AI-generated code in a production setting.

To that end, I ran an experiment that has changed my mindset from “I must always review AI-generated code” to “I must always verify AI-generated code.” By “review” I mean reading the code line by line. By “verify” I mean confirming the code is correct, whether through review, machine-enforceable constraints, or both.

Source: Toward automated verification of unreviewed AI-generated code, Peter Lavigne

Code generation was never the bottleneck: that’s a refrain we hear daily from people skeptical about using LLMs for software engineering. So what are the other bottlenecks? One is verification: ensuring that the generated code is of sufficient quality. We’ve long had techniques for this. Some, such as code review, rely on humans, but much of it is already automated with linters, compilers, and test suites. A really important question any software engineer should ask is: what signals would make me feel comfortable accepting code? And we think that’s not a one-and-done answer.

202603

We’ve all heard of those network effect laws: the value of a network goes up with the square of the number of members. Or the cost of communication goes up with the square of the number of members, or maybe it was n log n, or something like that, depending how you arrange the members. Anyway doubling a team doesn’t double its speed; there’s coordination overhead. Exactly how much overhead depends on how badly you botch the org design.

But there’s one rule of thumb that someone showed me decades ago, that has stuck with me ever since, because of how annoyingly true it is. The rule is annoying because it doesn’t seem like it should be true. There’s no theoretical basis for this claim that I’ve ever heard. And yet, every time I look for it, there it is.

Every layer of approval makes a process 10x slower

Source: 202603, apenwarr

This detailed essay has at its heart a belief about systems—that each layer of approval makes a process ten times slower. This may be empirically true, even for software development, but it is contingent. Much of this essay would have made more sense a year ago when we focused on the idea of large language models as code generators while keeping everything else about the software engineering process unchanged. But that’s not what’s happening. Quality assurance and verification are increasingly something we can rely on—or that systems can do. Formal verification techniques, which have been used for decades but relied on a tiny number of extremely capable experts, are becoming increasingly tractable to large language models. The challenge when new and transformative technologies emerge is not to see their obvious application, but their broader application. We’ve focused a lot on code generation with these technologies over the last three or four years, but that’s not the only place in the software engineering process where they are already having, and will increasingly have, an impact.
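As a rough illustration of the rule of thumb at the heart of the essay, the arithmetic can be sketched in a few lines of Python. The function name, the one-hour base task, and the clean factor of 10 are assumptions for illustration; the essay presents the rule as an empirical observation, not a formula.

```python
def elapsed_hours(base_hours: float, approval_layers: int, slowdown: float = 10.0) -> float:
    """Estimate wall-clock time when each approval layer multiplies delay by `slowdown`."""
    if approval_layers < 0:
        raise ValueError("approval_layers must be non-negative")
    return base_hours * slowdown ** approval_layers


# A hypothetical one-hour task passing through 0 to 3 layers of approval.
for layers in range(4):
    print(f"{layers} layer(s): {elapsed_hours(1.0, layers):,.0f} hours")
```

Because the slowdown compounds, removing or automating a single layer has a far larger effect than speeding up the underlying task, which is why it matters whether verification can increasingly be done by systems.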

AI AGENTS & WEB INFRASTRUCTURE

WebMCP for Beginners

Raise your hand if you thought WebMCP was just an MCP server. Guilty as charged. I did too. It turns out it’s a W3C standard that uses similar concepts to MCP. Here’s what it actually is.

WebMCP is a way for websites to define actions that AI agents can call directly.

Source: WebMCP for Beginners, Block (Goose)

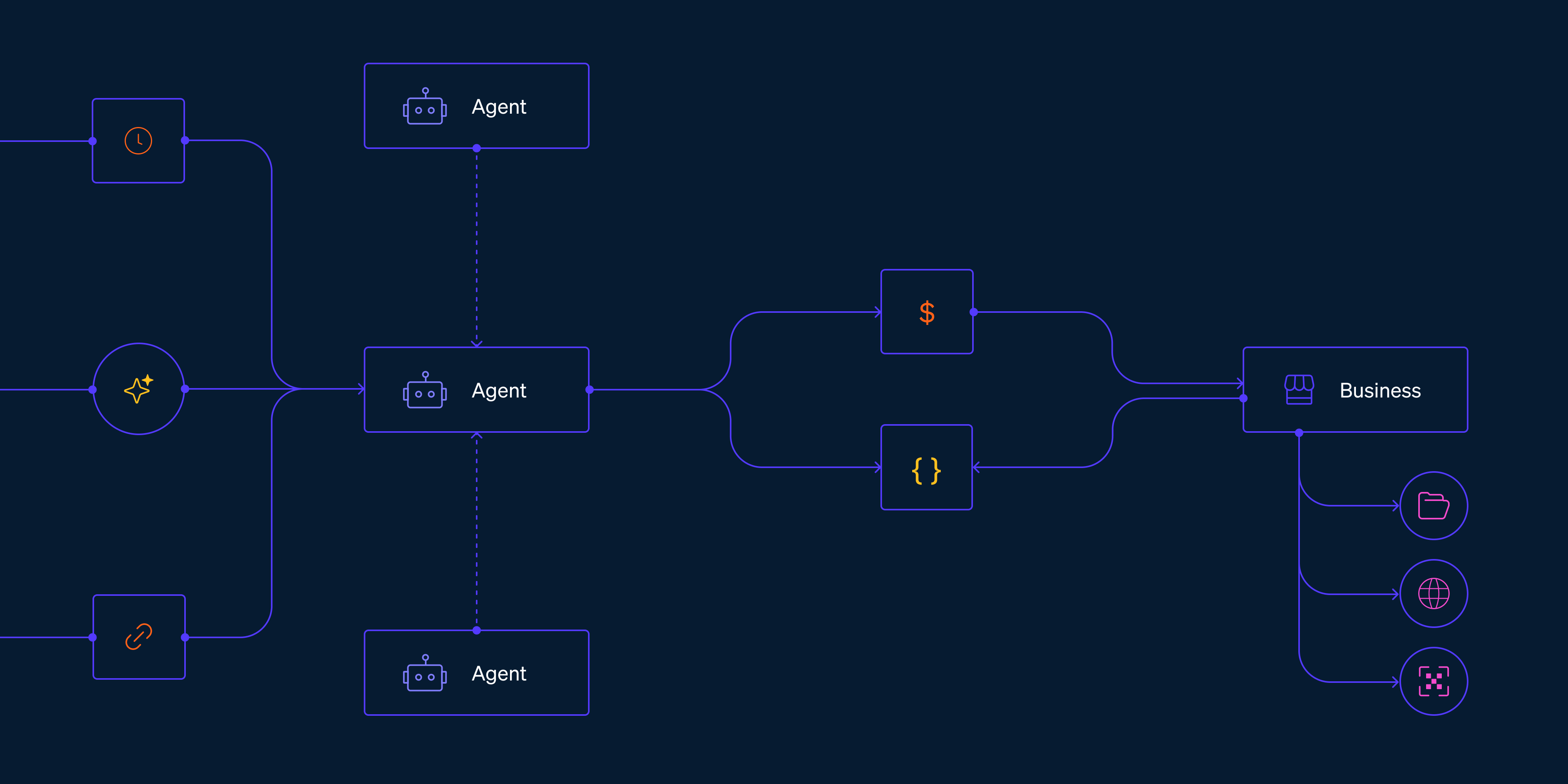

We’re increasingly of the opinion that for many websites, agents matter more than humans as users. But if you’ve tried to use many websites with an agent, you’ll find it can be very challenging. So there are a number of emerging standards and patterns for making sites more usable by agents. One of those is WebMCP, which Rizel Scarlett gives a great introduction to here.
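To make the idea concrete, here is a toy model in Python of a site declaring named actions that an agent can call directly instead of driving the UI. Everything in it (`Tool`, `ToolRegistry`, `search_talks`, the sample data) is hypothetical and only models the concept. It is not the WebMCP API, which is exposed to pages in the browser, so consult the specification for the real names and shapes.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Tool:
    name: str
    description: str
    parameters: dict[str, type]  # parameter name -> expected Python type
    handler: Callable[..., Any]


@dataclass
class ToolRegistry:
    tools: dict[str, Tool] = field(default_factory=dict)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def describe(self) -> list[dict[str, Any]]:
        """What an agent would read to discover the available actions."""
        return [
            {
                "name": t.name,
                "description": t.description,
                "parameters": {k: v.__name__ for k, v in t.parameters.items()},
            }
            for t in self.tools.values()
        ]

    def call(self, name: str, **kwargs: Any) -> Any:
        """Validate the arguments against the declared types, then run the action."""
        tool = self.tools[name]
        for key, expected in tool.parameters.items():
            if not isinstance(kwargs.get(key), expected):
                raise TypeError(f"{name}: '{key}' must be {expected.__name__}")
        return tool.handler(**kwargs)


# Hypothetical site data and action.
TALKS = ["Agents on the web", "Verifying generated code", "CSS in 2026"]


def search_talks(query: str) -> list[str]:
    return [t for t in TALKS if query.lower() in t.lower()]


site = ToolRegistry()
site.register(Tool("search_talks", "Find conference talks by keyword", {"query": str}, search_talks))

print(site.describe())                         # the agent discovers the action
print(site.call("search_talks", query="code"))  # and calls it directly
```

The point of the pattern is that the agent works from a declared name, description, and parameter list rather than guessing at buttons and form fields.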

Introducing the Machine Payments Protocol

However, the tools of the current financial system were built for humans, so agents struggle to use them. Making a purchase today can require an agent to create an account, navigate a pricing page, choose between subscription tiers, enter payment details, and set up billing—steps that often require human intervention.

To help eliminate these challenges, we’re launching the Machine Payments Protocol (MPP), an open standard, internet-native way for agents to pay—co-authored by Tempo and Stripe. MPP provides a specification for agents and services to coordinate payments programmatically, enabling microtransactions, recurring payments, and more.

Source: Introducing the Machine Payments Protocol, Stripe Blog

An increasingly significant percentage of all the visitors to your site will not be people but will be agents. This proposed new protocol for agent payments from Stripe and others is the plumbing we will need to enable that.

What is agentic engineering?

AI, AI Engineering, Coding Agent, Software Engineering

I use the term agentic engineering to describe the practice of developing software with the assistance of coding agents.

What are coding agents? They’re agents that can both write and execute code. Popular examples include Claude Code, OpenAI Codex, and Gemini CLI.

What’s an agent? Clearly defining that term is a challenge that has frustrated AI researchers since at least the 1990s but the definition I’ve come to accept, at least in the field of Large Language Models (LLMs) like GPT-5 and Gemini and Claude, is this one:

Source: What is agentic engineering?, Simon Willison’s Weblog

A new chapter from Simon Willison’s agentic engineering patterns looks at the definition of agentic engineering and the nature of agents in this context.

andrewyng/context-hub

Coding agents hallucinate APIs and forget what they learn in a session. Context Hub gives them curated, versioned docs, plus the ability to get smarter with every task. All content is open and maintained as markdown in this repo — you can inspect exactly what your agent reads, and contribute back.

Source: andrewyng/context-hub, GitHub

The legendary Andrew Ng asks: ‘Should there be a Stack Overflow for AI coding agents to share learnings with each other?’ And that’s what he is building here.

THE FUTURE OF SOFTWARE

Software Will Stop Being a Thing

AI, Economics, Software Engineering

A thoughtful essay made the rounds recently, arguing that AI-assisted coding splits the software world into three tiers. Tech companies at the top, where senior engineers review what AI produces. Large enterprises in the middle, buying platforms with guardrails and bringing in fractional senior expertise. And small businesses at the bottom, served by a new kind of local developer, a “software plumber” who builds custom tools at price points that finally make sense.

Source: Software Will Stop Being a Thing, Utopai

An unspoken assumption in all the conversation about the impact of AI on software engineering is that we will continue to develop software artifacts—apps, if you will—just more quickly, more efficiently, and more cheaply. But perhaps, as the author argues here, that won’t be the case.

Apple Quietly Blocks Updates for Popular ‘Vibe Coding’ Apps

Apple has quietly blocked AI “vibe coding” apps, such as Replit and Vibecode, from releasing App Store updates unless they make changes, The Information reports.

Apple told The Information that certain vibe coding features breach long-standing App Store rules prohibiting apps from executing code that alters their own functionality or that of other apps. Some of these apps also support building software for Apple devices, which may have contributed to a recent surge in new App Store submissions and, in some cases, slower approval times, according to developers.

Source: Apple Quietly Blocks Updates for Popular ‘Vibe Coding’ Apps, MacRumors

Earlier, we wrote a piece on how we believe software democratization is fundamentally shifting the relationship between people and technology, a shift as significant as the one from desktop to mobile. Software will be created by everyone, often ephemeral, shared like content, discovered through social and algorithmic channels. Web technologies will be the substrate for most of this creation, because the web is the only platform open enough to support it. Mobile platforms, which dominate our digital lives, were built for a different world. They assume software is a product made by professionals and distributed through official channels. They assume users need protection from the complexity of software. They assume gatekeeping is a feature, not a bug. These assumptions, however well intentioned when formulated nearly two decades ago, are now antiquated. The platforms built on them, for all their current dominance, may find themselves on the wrong side of a generational shift in how software gets made, shared, and used. It seems we’re starting to get some answers to that question.

porting software has been trivial for a while now. here’s how you do it.

This one is short and sweet. if you want to port a codebase from one language to another here’s the approach:

The key theory here is usage of citations in the specifications which tease the file_read tool to study the original implementation during stage 3. Reducing stage 1 and stage 2 to specs is the precursor which transforms a code base into high level PRDs without coupling the implementation from the source language.

Source: porting software has been trivial for a while now. here’s how you do it., Geoff Huntley

Recently we’ve covered articles talking about recreating open source software using an AI cleanroom approach that provides a like-for-like replacement with a different license. Here Geoff Huntley shows how trivial it is to do so.

AI ECONOMICS & WORKFORCE

The Great Turnover: 9 in 10 Companies Plan To Hire in 2026, Yet 6 in 10 Will Have Layoffs

AI, Economics, Software Engineering

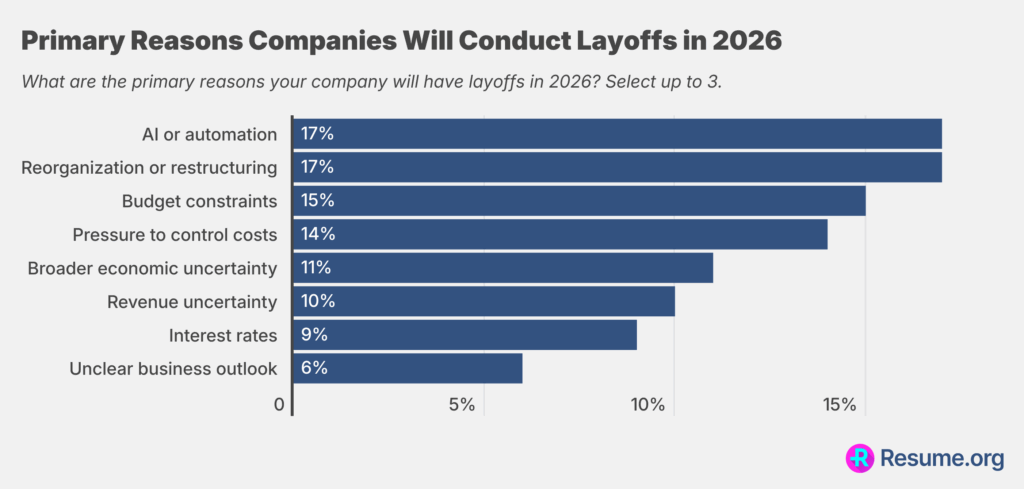

Resume.org’s latest survey of 1,000 U.S. hiring managers found that:

AI is influencing staffing decisions, but most companies aren’t experiencing the dramatic job replacement narrative that often gets pushed. Only 9% say AI has fully replaced certain roles, while nearly half (45%) say it has partially reduced the need for new hires, suggesting companies are using AI more as a hiring slowdown tool than a true workforce substitute. At the same time, 45% report that AI has had little to no impact on staffing levels, underscoring the uneven and limited effect it actually has across organizations.

Many companies admit they frame layoffs or hiring slowdowns as AI-driven because it plays better with stakeholders than saying the real reason is financial constraints. Nearly 6 in 10 companies report doing this, including 17% that claim to do it exactly, and 42% that say they do it somewhat.

As has been observed elsewhere, it’s probably sensible to take the explanation that significant job reductions in companies have been driven by AI with a grain of salt.

A couple of days ago, I sat down with Vivek Bharathi and dumped my brains. Here’s the interview…

AI, Economics, Software Engineering

Below you’ll find an AI transcription of everything we riffed about.

Key distinction: Software Development vs. Software Engineering:

Here, Geoff Huntley writes up key points from his recent conversation with Vivek Bharathi.

SOFTWARE ENGINEERING PRACTICE

Grace Hopper’s Revenge

The world of software has lots of rules and laws. One of the most hilarious is Kernighan’s Law:

Debugging is twice as hard as writing the code in the first place. Therefore, if you write the code as cleverly as possible, you are, by definition, not smart enough to debug it.

In the past, humans have written, read, and debugged the code.

Now LLMs write code, humans read and debug. (And LLMs write voluminous mediocre code in verbose languages.)

Humans will do less and less. LLMs will write code, debug, and manage edge cases. LLMs will verify against human specifications, human audits, human requirements. And humans will only intervene when things are misaligned. Which they can see because they have easy verification mechanisms.

Source: Grace Hopper’s Revenge, The Furious Opposites

This essay by Greg Olsen considers the impact of large language models on software engineering, and in particular, the programming languages that work best with large language models. We wonder how much longer we’re going to be particularly concerned with questions like that—and when we’ll start seeing the emergence of programming languages and patterns and paradigms that are LLM-first.

If you thought the speed of writing code was your problem – you have bigger problems

The core idea is the Theory of Constraints, and it goes like this:

Every system has exactly one constraint. One bottleneck. The throughput of your entire system is determined by the throughput of that bottleneck. Nothing else matters until you fix the bottleneck.

The challenge with Andrew Murphy’s very thoughtful piece—and he’s someone with an immense amount of engineering and leadership experience—is that, like a piece we quoted earlier, he’s analyzing an existing system, identifying one aspect of it (code generation), and reasoning about what happens if that changes but nothing else does. But large language models and agentic systems are not simply increasing the cadence of code generation. They’re impacting all of the software development lifecycle, creating a much more complex system that we’re trying to reason about.
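The constraint argument quoted above, and the objection to it, can both be seen in a small Python sketch. The stage names and rates are invented for illustration, and modelling delivery as a simple serial pipeline whose throughput is the minimum stage rate is itself a simplifying assumption.

```python
def system_throughput(stage_rates: dict[str, float]) -> tuple[str, float]:
    """Return (bottleneck stage, throughput): the slowest stage caps the whole pipeline."""
    bottleneck = min(stage_rates, key=lambda stage: stage_rates[stage])
    return bottleneck, stage_rates[bottleneck]


# Hypothetical rates, in changes per week, for a delivery pipeline.
baseline = {"write code": 20.0, "review": 5.0, "test": 8.0, "deploy": 10.0}

# Case 1: only code generation gets 10x faster; review still caps throughput.
faster_coding = {**baseline, "write code": 200.0}

# Case 2: tooling also speeds up review and testing, so the bottleneck moves.
faster_everywhere = {**faster_coding, "review": 25.0, "test": 24.0}

for label, rates in [
    ("baseline", baseline),
    ("faster coding", faster_coding),
    ("faster everywhere", faster_everywhere),
]:
    stage, rate = system_throughput(rates)
    print(f"{label}: {rate:g} changes/week, limited by {stage}")
```

With these made-up numbers, speeding up coding alone leaves throughput unchanged, while changing several stages at once doubles it and shifts the constraint to deployment.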
