I see dead people. Your weekend reading from Web Directions

This week I’ve published a piece that tries to bring together a lot of what I’ve been thinking about: the rapidly increasing capability of not just frontier language models but the software ecosystems around them, and some of the first-order impacts these might have in the coming weeks and months. I See Dead People borrows from The Sixth Sense, in which the protagonist doesn’t realise that he is dead. I use that as a metaphor for perhaps all of us: we don’t realise that our old self, the one that spent years and decades becoming a software engineer or a lawyer or a designer, is kind of dead. That goes not just for individuals but for entire significant organisations, and perhaps more as well. It might sound hyperbolic (so many things do these days), but hopefully you’ll find it in some way interesting and valuable.

This week we also hosted the first Homebrew Agents Club, an idea that came together very quickly (so quickly that two attendees turned up the following day) and brought together 15 to 20 people in, of all places, a brewery (not a home brewery) in Sydney, where people showcased work they were doing. We got essentially the world premiere of Flo.Monster, an amazing piece of technology developed by a long-time friend of Web Directions and of mine, Rob Manson. Think of it as like OpenClaw but running entirely within your browser, and you can go and use it today. Just bring your API key from your model host of choice, and start experimenting.

Homebrew Agents Club also allowed you to bring your agent along — to connect not just with the people, but most importantly with other agents participating. Not one, but two people who heard about the event couldn’t make it and jokingly asked whether their agent could attend in their place, so I went and built that. It was a very interesting experiment, and I’m going to continue to work on it. There are obviously some very serious potential security implications, so we worked on some sanitisation and security ideas, incorporating some ideas from the Agent-to-Agent protocol. We discovered that agents need to be encouraged to continue to be present and participate in a conversation. They’re quite reactive still, and so when an agent converses with a human, it’s we humans that tend to keep that conversation going. But when agents talk to other agents, there tends to be an initial flurry which tapers off. So we thought a lot about how we can encourage agents to keep those conversations going.
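One way to picture the kind of encouragement described above is a small moderator that watches for quiet agents and prompts them. This is only a sketch under assumptions: the `Nudger` class, the `quiet_after` threshold and the wording of the prompt are all hypothetical, and it is not how the Homebrew Agents Club system actually works.

```python
from dataclasses import dataclass, field


@dataclass
class Nudger:
    """Hypothetical moderator that prompts agents who have gone quiet."""

    quiet_after: float = 60.0  # seconds of silence before a nudge (assumed value)
    last_spoke: dict[str, float] = field(default_factory=dict)

    def record_message(self, agent: str, now: float) -> None:
        # Remember when each agent last contributed to the conversation.
        self.last_spoke[agent] = now

    def nudges_due(self, now: float) -> list[str]:
        # Build a prompt for every agent that has been silent for too long.
        due = []
        for agent, spoke_at in sorted(self.last_spoke.items()):
            if now - spoke_at >= self.quiet_after:
                due.append(f"{agent}: anything to add, or a question for the others?")
                # Reset the clock so the same agent is not nudged on every check.
                self.last_spoke[agent] = now
        return due
```

Calling `record_message` whenever an agent speaks and `nudges_due` on a timer plays the role that we humans usually play in a conversation: noticing the lull and asking the next question.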

We’ve already had interest from other folks to run their own Homebrew Agents Club. If you’re elsewhere in the world, take a look at the site, and I’d love to hear from you!

Alright, this week I have read so many articles and listened to so many podcasts that I’ve got open tabs everywhere. But finding the time to convert these into our little “Elsewhere” clips with some thoughts from me has proved elusive. I still managed to get together half a dozen articles or so that I think could be valuable for you.

I hope you enjoy them and find them useful!

AI & Software Engineering

8 Agentic Coding Trends Shaping Software Engineering in 2026

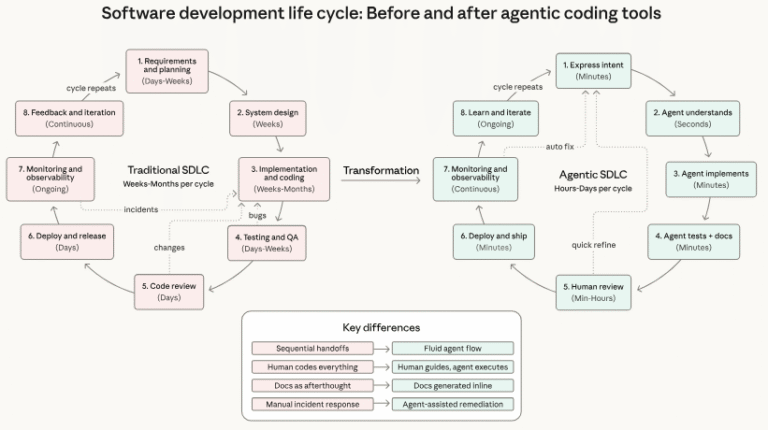

Software teams are under pressure to ship faster, but most AI coding tools still handle only narrow slices of the job rather than full builds — a pattern the next wave of agent systems is expected to change, according to Anthropic’s 2026 Agentic Coding Trends Report.

The report examines how AI coding agents are starting to move beyond one-off assistance into broader implementation roles across the software development lifecycle. Based on customer deployments and internal research, Anthropic identifies eight trends that it says will shape how engineering work is organised over the coming year.

Source: 8 Trends Shaping Software Engineering in 2026 — TESSL

The rate at which the agentic software engineering landscape is changing has only been accelerating. Predicting what might occur over a period of 12 months or so might seem like a fool’s errand, but I think it’s important to consider what might happen and how we might respond to it. This article from TESSL covers Anthropic’s report on the trends it expects to see in this space over the rest of the year.

Deep Blue

Becoming a professional software engineer is hard. Getting good enough for people to pay you money to write software takes years of dedicated work. The rewards are significant: this is a well compensated career which opens up a lot of great opportunities.

It’s also a career that’s mostly free from gatekeepers and expensive prerequisites. You don’t need an expensive degree or accreditation. A laptop, an internet connection and a lot of time and curiosity is enough to get you started.

The idea that this could all be stripped away by a chatbot is deeply upsetting.

Source: Deep Blue — Simon Willison

In March 2025 Annie Vella wrote The Software Engineering Identity Crisis, about how the identity of many software engineers was rooted in the act of writing code. It resonated widely, was reposted extensively and featured on various high-profile software engineering podcasts. Here, Simon Willison addresses something similar and talks about the concept of “Deep Blue”: the ennui felt by software engineers as the central part of their identity has rapidly become less relevant.

Finding Comfort in the Uncertainty

About 40 of us – practitioners, researchers, technical leaders from around the world – gathered in Deer Valley, Utah, for an invite-only retreat on the future of software development. The event was hosted by Martin Fowler and Thoughtworks, and held in the same mountains where the Agile Manifesto was written 25 years ago – with some of the original signatories in the room. Not an attempt to reproduce that moment, but an acknowledgement that the ground has shifted enough to warrant the same kind of conversation.

The format was an unconference. No presentations. No agenda handed down from above. Participants proposed sessions, voted with their feet, and talked. Over 30 sessions ran in parallel – far more than any one person could attend. The ones I was in were fascinating, and comparing notes with others afterwards revealed just how much convergence there was across sessions I’d missed. A long way to go for two days – but worth every hour.

Source: Finding Comfort in the Uncertainty — Annie Vella

Recently, Martin Fowler, a giant in the field of software engineering and one of the originators of the Agile movement, hosted a symposium, for want of a better word, on the future of software engineering in this era of AI and large language models. Here Annie Vella, who will be speaking at our upcoming AI Engineer conference in Melbourne, gives her write-up of what she took away from the event. It is very much worth your time. Fowler himself has a write-up of his responses to the event.

AI Tools & Architecture

OpenClaw Architecture and Insights

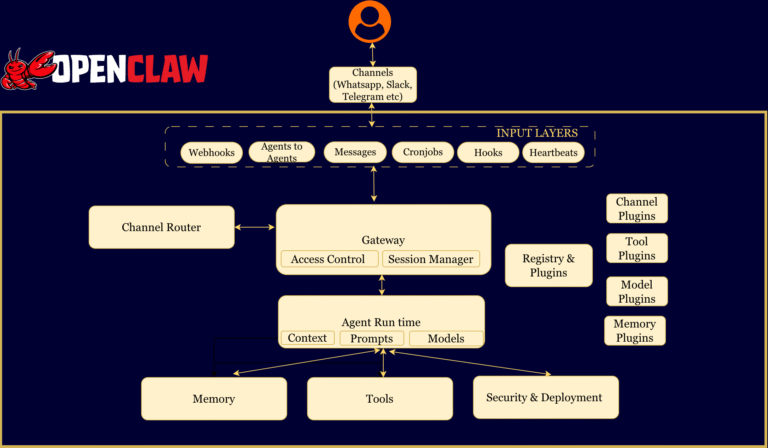

OpenClaw (a.k.a. Claudbot) is an open-source personal AI assistant (MIT licensed) created by Peter Steinberger that has quickly gained traction with over 180,000 stars on GitHub at the time of writing this blog. Lots of traction, internet buzz and use cases that evolve from here. I am going to cover something else, what makes this different and really powerful. It’s the architecture and how it was productised.

Source: OpenClaw Architecture and Insights — Techaways

OpenClaw has been a sensation like few others in the technology space in many years. Launched only back in November 2025, it is now the most-starred project ever on GitHub and incredibly widely used, at least by early adopters. It points in the direction of what future agentic AI systems might look and feel like. So how does it do its work? It’s an open-source project, so we can inspect its inner workings, which is what Navant Tirupathi has done here. If you want to learn more about how OpenClaw works, read on.

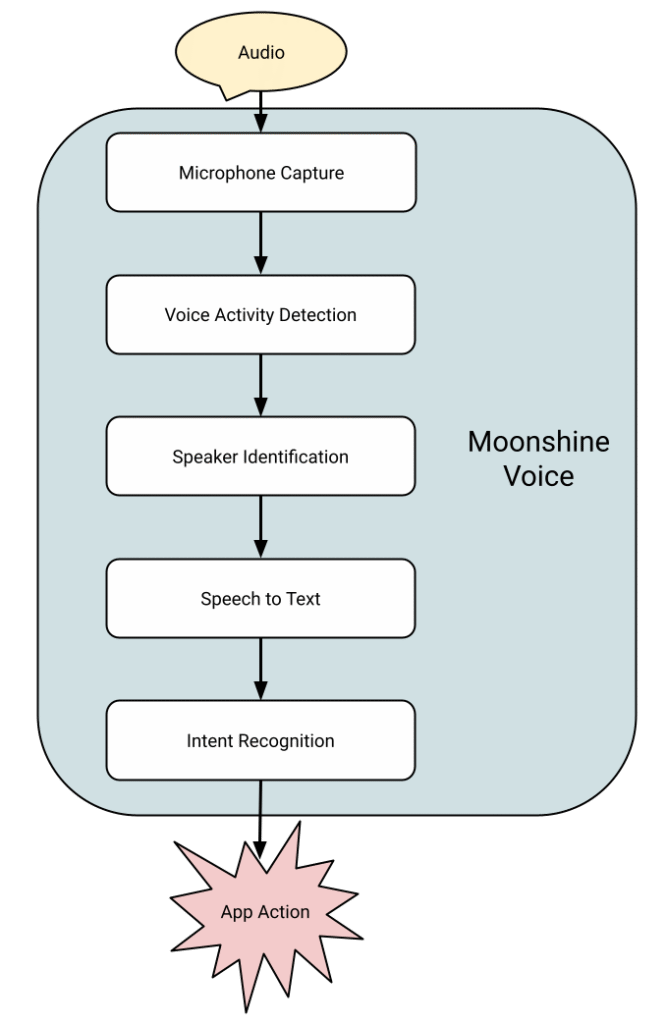

Announcing Moonshine Voice

Today we’re launching Moonshine Voice, a new family of on-device speech to text models designed for live voice applications, and an open source library to run them. They support streaming, doing a lot of the compute while the user is still talking so your app can respond to user speech an order of magnitude faster than alternatives, while continuously supplying partial text updates. Our largest model has only 245 million parameters, but achieves a 6.65% word error rate on HuggingFace’s OpenASR Leaderboard compared to Whisper Large v3 which has 1.5 billion parameters and a 7.44% word error rate. We are optimized for easy integration with applications, with prebuilt packages and examples for iOS, Android, Python, MacOS, Windows, Linux, and Raspberry Pis. Everything runs on the CPU with no NPU or GPU dependencies, and the code and streaming models are released under an MIT License.

Source: Announcing Moonshine Voice — Pete Warden’s Blog

Right now, most of the focus by software engineers, AI engineers, product people, and designers when it comes to AI is on large language models. They’re clearly very capable, but they’re not the only approach to achieving really interesting outcomes. There are a whole bunch of traditional machine learning techniques that can often be very useful for things like semantic search. There are vector and graph databases, and there are also small models. Someone who has been at the forefront of this space for many years is Pete Warden. His company has just released a new family of small models for speech-to-text that run on nothing more than a CPU. I think they are a really good example of the kind of technologies we perhaps overlook that could be very valuable in the products we’re building.

AI & Society

The Left Is Missing Out on AI

“Somehow all of the interesting energy for discussions about the long-range future of humanity is concentrated on the right,” wrote Joshua Achiam, head of mission alignment at OpenAI, on X last year. “The left has completely abdicated their role in this discussion. A decade from now this will be understood on the left to have been a generational mistake.”

It’s a provocative claim: that while many sectors of the world, from politics to business to labor, have begun engaging with what artificial intelligence might soon mean for humanity, the left has not. And it seems to be right.

This idea, that large-language models merely produce statistically plausible word sequences based on training data, without having any idea about what the words refer to, has become the baseline across much of the left-intellectual landscape. Thanks to it, fundamental questions about AI’s capabilities, now and in the future, are considered settled.

Source: The Left Is Missing Out on AI — Dan Kagan-Kans, Transformer

I’m not sure that the claim from Joshua Achiam at OpenAI is entirely true, but it may well be directionally so. From mostly anecdotal evidence (people I follow on social media, events that I attend), I find folks who might broadly be called “the left” are much more likely to be resistant, and even antagonistic, toward AI technologies. In some ways that’s not without reason, and I think there are genuinely very important conversations to be had about everything from the environmental impact to the eternal tension between labour and capital, and the enclosure of the intellectual commons that large language models in many ways represent. But I think that steadfastly, and often increasingly irrationally or erroneously, critiquing the technologies themselves, as Dan Kagan-Kans observes here (“just next-token prediction…”), simply deals you out of the conversation about what these technologies might look like and how they may be applied in the future.
