A weekend reading from Web Directions

A couple of quick notes before this week’s huge crop of articles.

We’ve just announced the first round of keynote speakers for the upcoming AI Engineer Conference. I’m incredibly excited and proud of the program we are bringing together.

And speaking of programs, CFPs close tomorrow for AI Engineer and AI x Design. We’d love to hear your proposals!

And tickets are genuinely selling quickly for these events, so start planning now. They will sell out!

With the demise of Twitter, I increasingly publish and amplify things over on LinkedIn. Meanwhile Bluesky and Mastodon, which I use quite a bit, are still in their infancy. I find that, for all its shortcomings, LinkedIn can be a very good platform for discovering and sharing ideas.

So if you don’t already, please follow me over there. And if we’ve ever connected at all, including at any of our conferences, please feel free to send a connection request. I rarely turn those down.

Hardware is ready for its moment

Last week, in less than a day, I programmed an M5Stack Core2 to be my personal recording assistant. I’d been using my iPhone to capture voice memos, then manually transferring the audio to my Mac and going through a series of largely manual steps to turn recordings into transcripts and ultimately summaries.

But in just a couple of hours I programmed a device with a programming language and an entire technology stack that I know next to nothing about. I integrated the hardware via Wi-Fi into my Mac ecosystem and took a process that was manual and clunky and made it effectively frictionless.

I think we’re about to see an explosion in bespoke hardware applications like this — small, purpose-built, AI-powered devices created by people who, until very recently, would never have considered themselves hardware developers. The same force that’s democratising software creation is coming for hardware. Scratch that — it’s not coming. It’s already here.

Read the full story at the Web Directions blog.

This week’s reading

AI & Agentic Coding

Harness Engineering

AI Software Engineering AI Engineering

It was very interesting to read OpenAI’s recent write-up on “Harness engineering” which describes how a team used “no manually typed code at all” as a forcing function to build a harness for maintaining a large application with AI agents. After 5 months, they’ve built a real product that’s now over 1 million lines of code.

Source: Harness Engineering – Exploring Gen AI, Martin Fowler

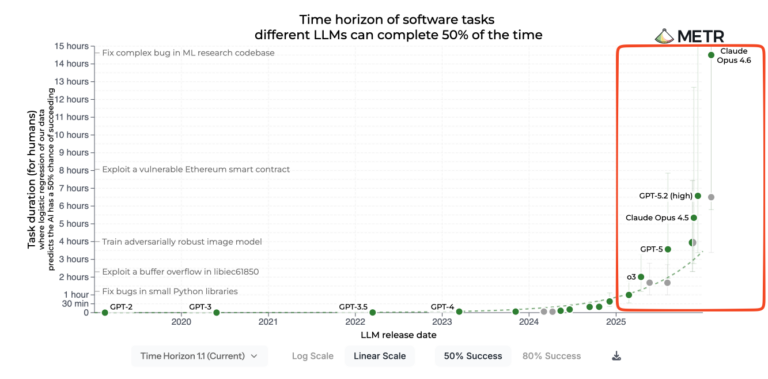

The consensus among people who’ve been working with code generation tools for some time is that something happened in November and December of 2025. Certainly, a big part of that was the arrival of Opus 4.5 from Anthropic and OpenAI’s GPT-5.2 models.

But we also saw, over the couple of months since then, the emergence of the system now known as OpenClaw, which itself relies on third-party models. We also saw a brand new desktop application for coding from OpenAI, as well as the integration of Claude Code into Anthropic’s desktop apps and into the systems those apps are running on. Finally, there was the emergence of Claude Cowork.

The term “harness” has increasingly come to be applied to systems like this. They combine logic, tools and more, tapping into the capability of large language models while striving to improve on what those models can do alone.

And while new models, particularly major step-function improvements, take months or sometimes even years to arrive, these harnesses can be improved nearly continuously, and can indeed improve themselves by installing new skills or the equivalent.

It seems highly logical that we won’t simply rely on third-party harnesses. Many organisations or individuals may well build their own, perhaps tailored for specific use cases. One I’m exploring right now is the equivalent of Claude Cowork but for learning.

So I definitely think this is something developers should be paying attention to, and this piece from Birgitta Böckeler explores some of the implications, particularly within enterprise software engineering practice.

How We Rebuilt Next.js with AI in One Week

Last week, one engineer and an AI model rebuilt the most popular front-end framework from scratch. The result, vinext (pronounced “vee-next”), is a drop-in replacement for Next.js, built on Vite, that deploys to Cloudflare Workers with a single command. In early benchmarks, it builds production apps up to 4x faster and produces client bundles up to 57% smaller. And we already have customers running it in production.

This is not a wrapper around Next.js and Turbopack output. It’s an alternative implementation of the API surface: routing, server rendering, React Server Components, server actions, caching, middleware. All of it built on top of Vite as a plugin. Most importantly Vite output runs on any platform thanks to the Vite Environment API.

Source: How We Rebuilt Next.js with AI in One Week – Cloudflare Blog

This story has been getting quite a bit of traction in the last couple of days. It’s the story of how Cloudflare recreated Next.js from scratch using agentic coding systems for around $1,000 in tokens.

It’s a very significant example of what agentic systems are now capable of.

But my instinct is this is a transitional step in our understanding of how to work with agentic coding systems. We’re still very wedded to the existing patterns and existing technology stacks that we use. But why not just abandon that whole layer that Next.js served?

Of course, in the short term, that would require rewriting everything that sits on top of Next.js.

This way, that code base can be maintained, and the functionality provided by Next.js can be rewritten. It feels like only a matter of time before that step doesn’t make sense anymore, and the code Cloudflare has written on top of Next.js as well as Next.js itself can be entirely replaced with new code that operates at a lower level in terms of abstractions, reaching down directly toward the DOM and its APIs.

That’s a pattern I suspect we’ll see starting to emerge in the near future.

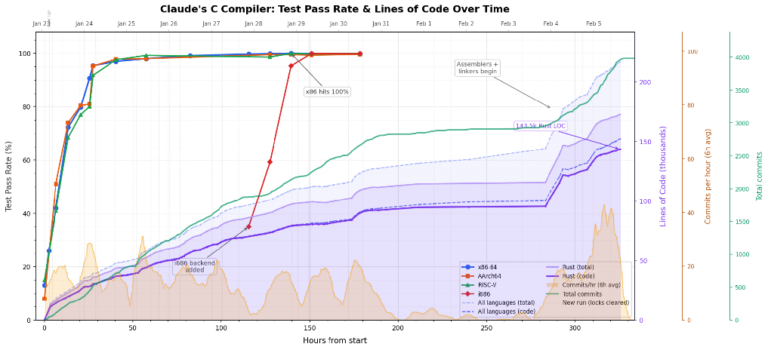

The Claude C Compiler: What It Reveals About the Future of Software

Compilers occupy a special place in computer science. They’re a canonical course in computer science education. Building one is a rite of passage. It forces you to confront how software actually works, by examining languages, abstractions, hardware, and the boundary between human intent and machine execution.

Compilers once helped humans speak to machines. Now machines are beginning to help humans build compilers.

Source: The Claude C Compiler: What It Reveals About the Future of Software – Modular

A number of recent software projects using code generation tools have seemed like watershed moments in the evolution of this technology. Browsers developed solely using agentic coding systems, and now a C compiler, show how far code generation has come in just the last few months.

Here, Chris Lattner takes a deeper look at this project and the implications it has, and what it reflects about the state of agentic coding systems in early 2026.

WTF Happened in (December) 2025?

AI AI Engineering Coding Agent Software Engineering

A collection of datapoints in or around 2025 which we may look back as a historical inflection point. Open source & curated in realtime by Latent Space.

Source: WTF Happened in 2025? – Latent Space

I’m certainly not alone in thinking that something happened in late November or early December. With the launch of a number of new frontier models and what we’re calling harnesses, whether it’s OpenClaw or a new version of Claude Code, there was a step change in how capable agentic coding systems became.

Here, Shawn Wang and the Latent Space folks have collected a series of moments to capture a sense of how this unfolded rapidly over a period of weeks.

OpenClaw Is the Most Fun I’ve Had with a PC in 50 Years

Opinion Fifty years ago this month, I touched a computer for the first time. It was an experience that pegged the meter for me like no other – until last week.

My first encounter happened in New England where the winters produce something close to cabin fever. My friend Bobby’s mother forced us out of his house one Saturday morning, packing us off with his dad, who had to spend the day at the office. Bobby seemed excited. I couldn’t see why.

Source: OpenClaw Is the Most Fun I’ve Had with a PC in 50 Years – The Register

Mark Pesce is a very long-term friend in the world of technology. We’re of a very similar vintage and, I think, heritage when it comes to computing. Very early, somewhat accidental adopters and non-stop users of these technologies for over 50 years—in my case, just a little bit less.

Here Mark talks about his first experience with computing and then his most recent revelatory one. I’ll admit to having a small part to play in this story. I badgered Mark after I first used Clawdbot, now called OpenClaw, a few weeks ago and had a moment like he describes. It’s pretty hard to describe why, but when he got himself up and running, as he describes here, he had a moment like that too.

AI Engineering Patterns & Practices

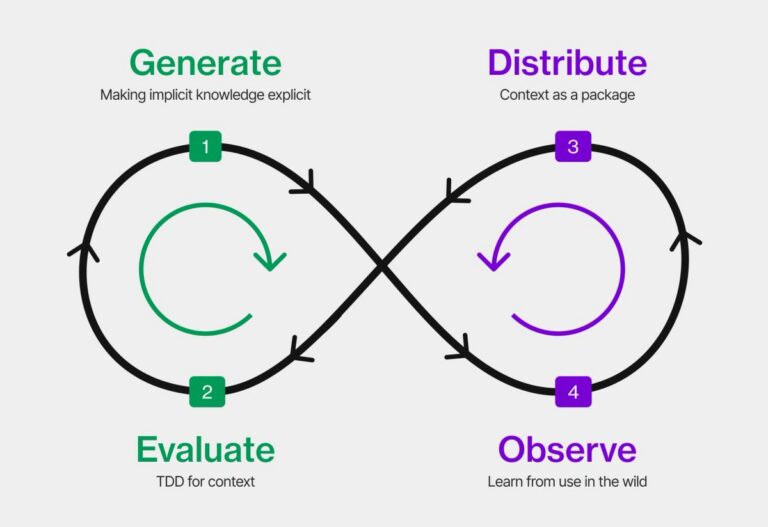

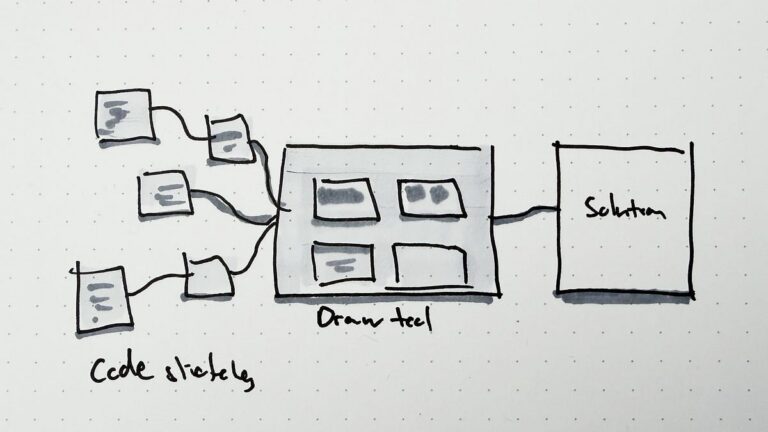

The Context Development Lifecycle: Optimising Context for AI Coding Agents

Why the next software development revolution isn’t about code, it’s about context.

We’ve spent decades perfecting how humans write code. We’ve built entire methodologies (waterfall, agile, DevOps, platform engineering) around the assumption that the bottleneck is getting working software from a developer’s head into production. But that assumption just broke.

Coding agents are writing code now. Not just autocompleting lines, but writing features, fixing bugs, scaffolding entire services. The bottleneck has shifted. It’s no longer about how fast we can write and ship code. It’s about how well we can describe what the code should do, why it should do it, and how it should behave in the messy reality of our systems.

This means developers have inherited a new responsibility: translating implicit organisational knowledge into something structured enough for another entity to act on. Making the implicit explicit, at the right level of detail, for an audience that takes everything literally. And if context is the new bottleneck, then we need a development lifecycle built around it. I think what’s emerging is a Context Development Lifecycle (CDLC), and it will reshape how we think about software development as profoundly as DevOps reshaped how we think about delivery and operations.

Source: The Context Development Lifecycle – Tessl

The debate over whether coding agents can write sophisticated production-ready code is over. The question now is how we go about developing software as professional software engineers.

Here, Patrick Debois—the father of DevOps and long-time advocate of AI-assisted software development—considers what our role is becoming in this new era.

Writing Code Is Cheap Now – Agentic Engineering Patterns

The biggest challenge in adopting agentic engineering practices is getting comfortable with the consequences of the fact that writing code is cheap now.

Delivering new code has dropped in price to almost free… but delivering good code remains significantly more expensive than that.

For now I think the best we can do is to second guess ourselves: any time our instinct says “don’t build that, it’s not worth the time” fire off a prompt anyway, in an asynchronous agent session where the worst that can happen is you check ten minutes later and find that it wasn’t worth the tokens.

Source: Writing Code Is Cheap Now – Simon Willison

Simon Willison has, for several years now, been one of the people I pay most attention to when it comes to software engineering and AI.

Simon has been working with these technologies and—importantly—bringing back the lessons he’s learned and sharing those on a daily basis for years now, and his insights are pretty much unparalleled.

So I’m not the first to make the observation that code generation gives us permission to do things we may otherwise not start because we have no idea how long they would take.

As Simon concludes here, we have to get over our instincts as experienced software engineers, which say it’s not worth my time even exploring an idea, when exploring an idea can be measured in minutes, not hours or days.

Linear Walkthroughs – Agentic Engineering Patterns

AI Design Patterns Software Engineering

Sometimes it’s useful to have a coding agent give you a structured walkthrough of a codebase.

Maybe it’s existing code you need to get up to speed on, maybe it’s your own code that you’ve forgotten the details of, or maybe you vibe coded the whole thing and need to understand how it actually works.

Frontier models with the right agent harness can construct a detailed walkthrough to help you understand how code works.

Source: Linear Walkthroughs – Agentic Engineering Patterns, Simon Willison

Simon Willison is working on what he calls a “book-shaped” project to document agentic coding patterns.

Here he talks about how he gets systems to give him a linear walkthrough of the code that he has developed. He’s not doing line-by-line code reviews; instead, he gets a sense of how the system overall works.

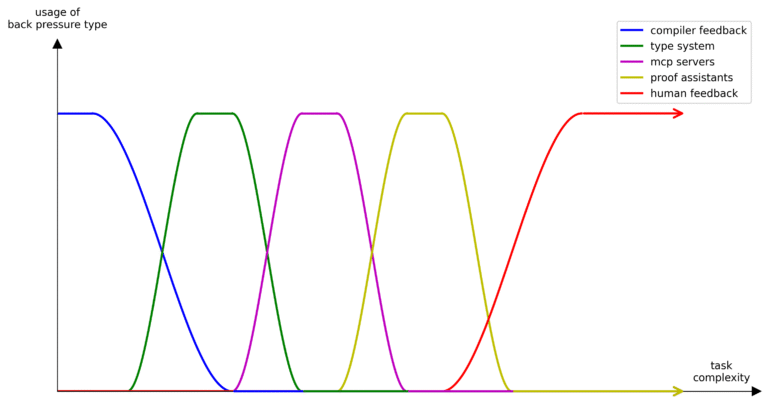

Don’t Waste Your Back Pressure

AI AI Engineering LLMs Software Engineering

You might notice a pattern in the most successful applications of agents over the last year. Projects that are able to setup structure around the agent itself, to provide it with automated feedback on quality and correctness, have been able to push them to work on longer horizon tasks.

This back pressure helps the agent identify mistakes as it progresses and models are now good enough that this feedback can keep them aligned to a task for much longer. As an engineer, this means you can increase your leverage by delegating progressively more complex tasks to agents, while increasing trust that when completed they are at a satisfactory standard.

Source: Don’t Waste Your Back Pressure – Moss Ebeling

Here, Moss Ebeling talks about an emerging pattern that applies to software engineering but, potentially, to any use of large language models. Back pressure is automated feedback on an agentic system’s output, fed back into the system in a loop to improve the quality of that output. Think of the Ralph Wiggum technique from friend of Web Directions Geoff Huntley as an example of this.

My instinct is this pattern will become increasingly important for AI engineers, not just when it comes to code production, but much more broadly.
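To make the back pressure loop concrete, here is a minimal sketch in Python. It is not taken from Moss Ebeling’s article: `run_with_back_pressure`, `fake_agent` and `run_tests` are hypothetical names, and the “agent” is a stand-in function rather than a real model call. The point is only the shape of the loop: generate, check automatically, feed the failure back in, and try again.

```python
from typing import Callable, List, Optional, Tuple


def run_with_back_pressure(
    generate: Callable[[str, List[str]], str],
    check: Callable[[str], Optional[str]],
    task: str,
    max_attempts: int = 5,
) -> Tuple[Optional[str], List[str]]:
    """Call generate until check passes or the attempts run out.

    Returns (accepted output or None, feedback messages collected so far).
    """
    feedback: List[str] = []
    for _ in range(max_attempts):
        output = generate(task, feedback)
        problem = check(output)  # None means the automated check passed
        if problem is None:
            return output, feedback
        feedback.append(problem)  # the back pressure: failures go back to the agent
    return None, feedback


# Hypothetical stand-ins so the sketch runs without a model.
def fake_agent(task: str, feedback: List[str]) -> str:
    # Pretend the agent only fixes its bug after seeing a failure message.
    if feedback:
        return "def add(a, b):\n    return a + b\n"
    return "def add(a, b):\n    return a - b\n"


def run_tests(source: str) -> Optional[str]:
    # A real harness would run generated code in a sandbox, not with exec().
    namespace: dict = {}
    exec(source, namespace)
    result = namespace["add"](2, 3)
    if result != 5:
        return f"add(2, 3) returned {result}, expected 5"
    return None


accepted, history = run_with_back_pressure(fake_agent, run_tests, "write add(a, b)")
```

In this toy run the first attempt fails the check, the failure message is handed back, and the second attempt is accepted. Swapping `run_tests` for a type checker, a linter or a build step gives the same loop a different source of back pressure.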

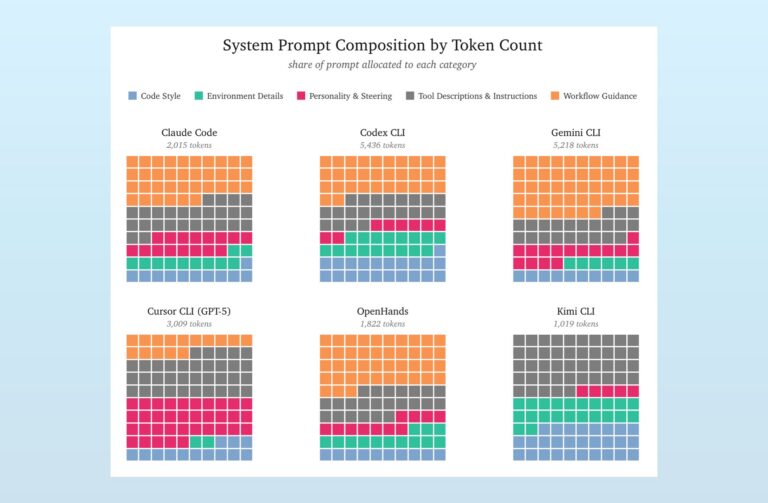

How System Prompts Define Agent Behaviour

Coding agents are fascinating to study. They help us build software in a new way, while themselves exemplifying a novel approach to architecting and implementing software. At their core is an AI model, but wrapped around it is a mix of code, tools, and prompts: the harness.

A critical part of this harness is the system prompt, the baseline instructions for the application. This context is present in every call to the model, no matter what skills, tools, or instructions are loaded. The system prompt is always present, defining a core set of behaviors, strategies, and tone.

Source: How System Prompts Define Agent Behaviour – Drew Breunig

Prompts may not be quite the hot topic they were three or so years ago when prompt engineering was the hot new role, but they still play a critical role in AI engineering—and not just for engineers using models, but actually for the model developers themselves.

Here is a breakdown of the system prompts—that is, the prompts added to your own prompts when calling various models—and an analysis of the role they play in agentic systems.
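As a rough illustration of what “present in every call” means, here is a small Python sketch. The role/content message shape is the common chat convention and is an assumption here, as are the names `SYSTEM_PROMPT` and `build_messages`; some APIs take the system prompt as a separate parameter instead. No model is called.

```python
from typing import Dict, List

Message = Dict[str, str]

# Hypothetical system prompt, purely for illustration.
SYSTEM_PROMPT = "You are a careful coding agent. Explain changes before making them."


def build_messages(
    system_prompt: str, history: List[Message], user_input: str
) -> List[Message]:
    """Assemble the messages for one model call, system prompt always first."""
    return (
        [{"role": "system", "content": system_prompt}]
        + list(history)
        + [{"role": "user", "content": user_input}]
    )


history: List[Message] = []

# First turn: only the system prompt and the user's request are sent.
first_call = build_messages(SYSTEM_PROMPT, history, "Rename the config loader.")

# Record the turn, then build the second call from the longer history.
history += [first_call[-1], {"role": "assistant", "content": "Done."}]
second_call = build_messages(SYSTEM_PROMPT, history, "Now add a test for it.")
```

The conversation history grows from call to call, but the first message is identical every time, which is why small changes to a system prompt can shift an agent’s behaviour everywhere at once.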

Software Engineering & the AI Transition

The Final Bottleneck

But to me it still feels different. Maybe that’s because my lowly brain can’t comprehend the change we are going through, and future generations will just laugh about our challenges. It feels different to me, because what I see taking place in some Open Source projects, in some companies and teams feels deeply wrong and unsustainable. Even Steve Yegge himself now casts doubts about the sustainability of the ever-increasing pace of code creation.

So what if we need to give in? What if we need to pave the way for this new type of engineering to become the standard? What affordances will we have to create to make it work? I for one do not know. I’m looking at this with fascination and bewilderment and trying to make sense of it.

Source: The Final Bottleneck – Armin Ronacher

Armin Ronacher is a well-known open-source software developer with many years of experience. Over the last year, he’s moved from scepticism toward code generation tools to embracing them.

But here he ponders what happens when humans can’t keep up with verifying the quality of code that’s produced and become a significant bottleneck in its production. Do we, he asks, need new approaches to software engineering? It seems to me almost certainly so. But those approaches, and their foundational patterns, are only now beginning to emerge.

StrongDM Software Factory

We built a Software Factory: non-interactive development where specs + scenarios drive agents that write code, run harnesses, and converge without human review.

The narrative form is included below. If you’d prefer to work from first principles, I offer a few constraints & guidelines that, applied iteratively, will accelerate any team toward the same intuitions, convictions, and ultimately a factory of your own. In kōan or mantra form:

Source: StrongDM Software Factory

Here, the folks at StrongDM outline their thesis and approach to building a software practice where two key features are: humans do not write a line of code, and humans do not review a line of code. So how can this possibly work? That’s what they discuss here.

AI Models & Infrastructure

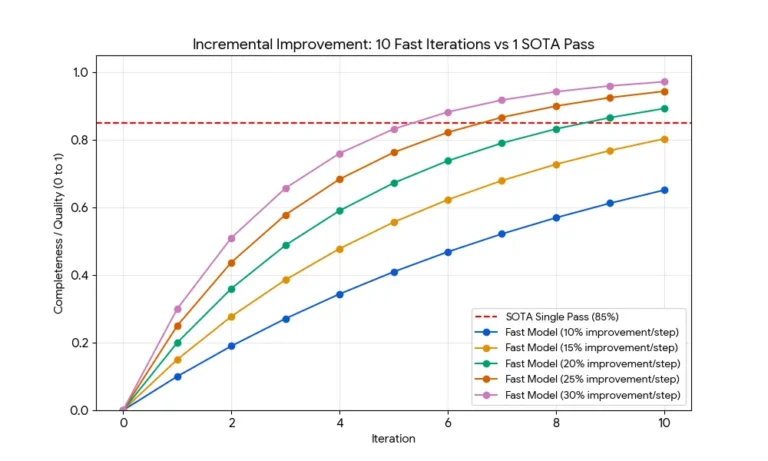

Mercury 2 Won’t Outthink Frontier Models but Diffusion Might Out-Iterate Them

Inception Labs have just released Mercury 2 – their latest diffusion large language model and it’s pretty solid. This goes beyond a technical proof of concept and into the realms of something that is genuinely interesting as well as practically useful.

To my mind, this is the first “prime time” diffusion language model that’s viable for developers. One that is blisteringly fast at text generation and powerful enough to do real work – especially for coding, which I’ll get to in a moment.

Source: Mercury 2 Won’t Outthink Frontier Models but Diffusion Might Out-Iterate Them – Andrew Fisher

Standard, state-of-the-art models like those from Anthropic and OpenAI are autoregressive models, which work by predicting the next token, one token at a time. This approach has led to some extraordinary results, but it makes generation inherently sequential, which is a significant performance bottleneck.

A different approach called diffusion is what’s behind many image generation models. In a nutshell, it works by generating tokens between other tokens, many at once, rather than simply predicting the one that comes at the end.

There’s been an increasing amount of work put into using a diffusion approach for text-based models. Here Andrew Fisher looks at Mercury 2, a new diffusion model, and how, even though it is less capable than frontier models, its dramatically improved speed might close the gap in many programming applications.
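To get a feel for why this matters for speed, here is a deliberately crude toy in Python. It is not how Mercury 2 or any real diffusion model works: there is no model, no noise and no refinement, and both function names are hypothetical. It only counts sequential passes, comparing one token per pass with filling several masked positions per pass.

```python
from typing import List, Optional

MASK = None  # marker for a position that has not been filled in yet


def autoregressive_passes(target: List[str]) -> int:
    """Emit tokens left to right, one pass per token."""
    produced: List[str] = []
    passes = 0
    while len(produced) < len(target):
        produced.append(target[len(produced)])  # stand-in for "predict the next token"
        passes += 1
    return passes


def parallel_fill_passes(target: List[str], per_pass: int) -> int:
    """Start fully masked and fill up to per_pass positions on each pass."""
    if per_pass < 1:
        raise ValueError("per_pass must be at least 1")
    canvas: List[Optional[str]] = [MASK] * len(target)
    passes = 0
    while MASK in canvas:
        masked = [i for i, tok in enumerate(canvas) if tok is MASK]
        # Real models decide which positions to commit in their own way;
        # taking the first few masked slots is just the simplest choice here.
        for i in masked[:per_pass]:
            canvas[i] = target[i]  # stand-in for "fill in this position"
        passes += 1
    return passes


tokens = "def add ( a , b ) : return a + b".split()
sequential = autoregressive_passes(tokens)
parallel = parallel_fill_passes(tokens, per_pass=4)
```

For these twelve tokens the sequential loop needs twelve passes and the parallel one needs three. In a real system each pass has its own cost and quality trade-offs, so treat the ratio as an intuition pump rather than a benchmark.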

mesh-llm — Decentralised LLM Inference

Turn spare GPU capacity into a shared inference mesh. Serve many models across machines, run models larger than any single device, and scale capacity to meet demand. OpenAI-compatible API on every node.

Source: mesh-llm — Decentralised LLM Inference – Michael Neale

Long, long-time friend of Web Directions, Mike Neale has a new project that allows for distributed inference where you and others can share a small amount of your compute to run inference for open-source models.

You can try it now. You can share your own resources or take advantage of others’.
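Because each node is described as exposing an OpenAI-compatible API, a request to one might look something like the Python sketch below. The host, port and model name are placeholders I have made up, and the `/v1/chat/completions` path is an assumption based on what “OpenAI-compatible” usually means, so check the project’s own documentation for the real values. The code only builds the request and does not send it.

```python
import json
import urllib.request

NODE_URL = "http://localhost:8000"  # hypothetical address of a mesh node
MODEL = "example-model"  # hypothetical model name


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request in the OpenAI style."""
    body = json.dumps(
        {
            "model": model,
            "messages": [{"role": "user", "content": prompt}],
        }
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",  # assumed path, see note above
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request(NODE_URL, MODEL, "Say hello.")
# To actually send it against a running node:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
```

The appeal of a compatible API is that existing client code can, in principle, be pointed at a different base URL without other changes.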

AI Security

The Promptware Kill Chain

AI AI Engineering Security Software Engineering

Attacks against modern generative artificial intelligence (AI) large language models (LLMs) pose a real threat. Yet discussions around these attacks and their potential defenses are dangerously myopic. The dominant narrative focuses on “prompt injection,” a set of techniques to embed instructions into inputs to LLM intended to perform malicious activity. This term suggests a simple, singular vulnerability. This framing obscures a more complex and dangerous reality. Attacks on LLM-based systems have evolved into a distinct class of malware execution mechanisms, which we term “promptware.” In a new paper, we, the authors, propose a structured seven-step “promptware kill chain” to provide policymakers and security practitioners with the necessary vocabulary and framework to address the escalating AI threat landscape.

Source: The Promptware Kill Chain – Lawfare

Anybody who works as a software engineer with large language models is at least peripherally aware of the novel security challenges they can present, perhaps best summarised as the “Lethal Trifecta”, a term Simon Willison coined for the dangerous combination of access to private data, exposure to untrusted content, and the ability to communicate externally.

Here the legendary Bruce Schneier and collaborators detail the “Promptware Kill Chain”, and how attacks on LLM-based systems can escalate dramatically.

Design & Prototyping

Sketching with Code

Sketching with code is a different mode, but it feels natural to me. I can often draw faster on a keyboard than with a stylus. Over the years, I developed a physical sketching system—symbols, color coding, hierarchy through line weight—as a shorthand to accelerate ideation. Sketching with code is the programmatic extension of that same system. The shorthand I built for pen and paper trained my instinct for what to feed the machine.

Source: Sketching with Code – Proof of Concept, David Hoang

How should we approach prototyping in the early stages of UI design? Do high-fidelity early-stage designs tend to lock us into specific approaches before we’ve properly explored the space of possibility? These have been contentious issues among design professionals for a long time now.

Now we can develop working prototypes in a matter of minutes. How does the design process change? How do we adapt to that? Here David Hoang talks about his design process and how he incorporates code generation into it.
