Your weekend reading from Web Directions

With the Easter long break here in Australia last week, we didn’t have a newsletter for the first time in quite a while–so hopefully we’re making up for it this week.

Before we begin with this week’s reading, some news about upcoming events and more from Web Directions.

PROJECT NOOPS

As I mentioned a couple of weeks back, Mark Pesce and I have teamed up to parse the signals out of the AI transformation as it happens at Noops. Read more and sign up for free.

AI ENGINEER NIGHT SYDNEY

Last night over 150 people attended our AI Engineer Night in Melbourne. If you’re in Sydney you get the chance to have a taste of what’s coming up at the AI Engineer Conference next week on Thursday.

It’s free, but places are limited so please RSVP!

AI ENGINEER UNCONFERENCES

Also taking place in Sydney (April 18th) and Melbourne (April 11th–that’s tomorrow), AI Engineer unconferences will explore the key ideas associated with the AI Engineer Conference series, from the impact of AI on the software development process and profession to the opportunities emerging with AI for new products and services. Whether it’s agents like OpenClaw or Enterprise Workloads, you set the agenda.

Again, AI Engineer Unconferences are free; just RSVP so we can plan best for the day.

AI ENGINEER AND AI X DESIGN WILL SELL OUT!

Tickets for AI Engineer and AI x Design are selling incredibly well–so don’t delay! Save hundreds on a full-priced ticket (full pricing kicks in May 8th) and, most importantly, make sure you don’t miss out!

THE WINCHESTER MYSTERY HOUSE ERA

Drew Breunig has given us the image we needed for where software engineering is right now: the Winchester Mystery House. Eric Raymond’s 1998 distinction between cathedrals and bazaars shaped a quarter-century of thinking about how software gets built. But AI has introduced a third mode—idiosyncratic, sprawling, cobbled-together systems that work but follow no coherent architectural plan. It’s a vivid metaphor, and the uncomfortable part is that it’s already accurate.

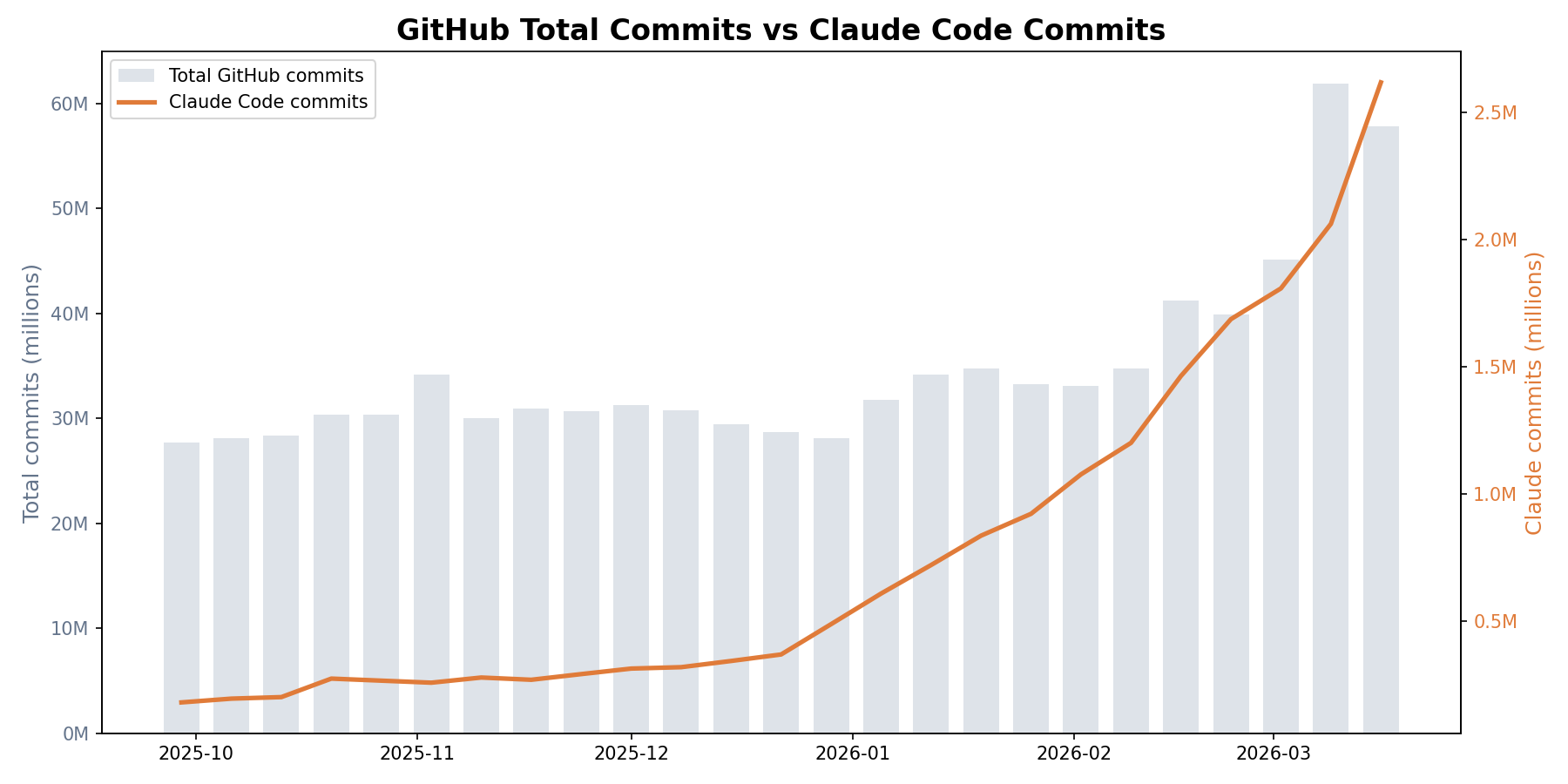

The numbers tell the story. Paul Kinlan’s analysis of GitHub commit data shows Claude Code alone went from 0.7% to 4.5% of all public GitHub commits in six months. All AI coding tools combined now account for roughly 5% of every public commit. That’s not a trend line—it’s a phase change happening in plain sight.

And it’s not just volume. Two of this fortnight’s stories illustrate the kind of output this tooling enables. Lalit Maganti spent 250 hours over three months building syntaqlite, a set of SQLite devtools he’d wanted for eight years but could never justify until AI coding agents changed the economics. What makes his account especially valuable is the systematic honesty: he breaks down where AI helped and where it was detrimental, which is far more useful than the one-shot miracle stories. Reco’s team rewrote their JSONata pipeline in Go in seven hours for $400 in tokens, borrowing a methodology from Cloudflare’s Next.js reimplementation: point AI at an existing test suite and have it implement code until every test passes. The result was a 1,000x speedup on common expressions and the start of a chain of optimizations that ended up saving $500K/year.

These aren’t toy projects. They’re production systems built by experienced engineers who understood the problem domain deeply enough to direct AI effectively. And that qualifier, “deeply enough to direct AI effectively”, is becoming the central question of software engineering practice.

Rahul Garg’s piece on encoding team standards makes this concrete. He observes that a senior engineer instinctively specifies architectural constraints, coding patterns, and security requirements when directing AI—while a junior developer asks for “a notification service” and gets whatever the model defaults to. Same codebase, same AI, completely different quality. His solution—treating AI instructions as versioned, shared infrastructure that encodes tacit team knowledge—is exactly the kind of systems thinking the Winchester Mystery House era needs. Without it, you get sprawl. With it, you get something closer to a cathedral, even if the construction crew is partly artificial.

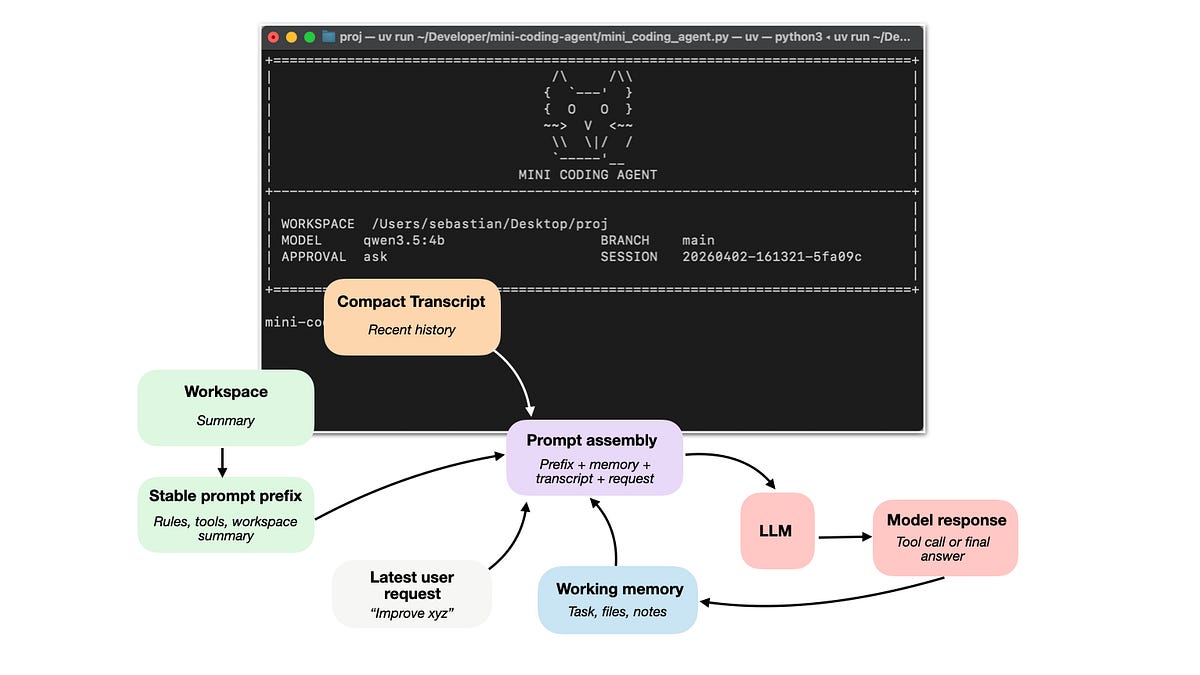

For those who want to understand the machinery beneath all of this, Sebastian Raschka’s anatomy of coding agents is an excellent starting point. His key insight is that much of the recent progress in practical LLM systems is not about better models but about how we use them—tool use, context management, and memory play as much of a role as the model itself. It’s why Claude Code or Codex feel significantly more capable than the same underlying models in a plain chat interface. And Sam Rose’s extraordinary visual essay on quantization complements this nicely, explaining how we can strip away three-quarters of a model’s numerical precision and barely dent its capability—which is why increasingly powerful models are running on hardware that would have seemed absurdly inadequate a year ago.

The complicating perspective this fortnight comes from MC Dean’s experiment building a design team out of AI agents. The discourse around AI and software engineering tends to treat “building with AI” as synonymous with “generating code.” But Dean is exploring what AI means for design as a practice—building shared skills, creating agent teams, asking what it means when your collaborators aren’t human. It’s a reminder that the transformation underway isn’t confined to writing code. The Winchester Mystery House metaphor applies to design, too, and every other domain where AI is making production cheap while leaving judgment expensive.

What to watch: the gap between teams that treat AI as a code autocomplete and teams that build systematic infrastructure around it—encoded standards, architectural thinking, proper observability—is about to become the defining competitive divide. The Winchester Mystery House is the default outcome. The question is whether your team is building something more deliberate.

AI & THE STATE OF SOFTWARE ENGINEERING

The Cathedral, the Bazaar, and the Winchester Mystery House

In 1998, Eric S. Raymond published the founding text of open source software development, The Cathedral and the Bazaar. In it, he detailed two methods of building software:

The ideas crystallized in The Cathedral and the Bazaar helped kick off a quarter-century of open source innovation and dominance.

But just as the internet made communication cheap and birthed the bazaar, AI is making code cheap and kicking off a new era filled with idiosyncratic, sprawling, cobbled-together software.

Meet the third model: The Winchester Mystery House.

Source: The Cathedral, the Bazaar, and the Winchester Mystery House, O’Reilly

Drew Breunig, who has done as much as anyone to explore and popularise the ideas around context engineering, reflects on the current state of software engineering through the lens of a classic late-1990s piece, The Cathedral and the Bazaar. He argues we are now seeing a third approach—the Winchester Mystery House approach to software engineering. It’s important to try to understand and reason about the profound transformations happening within software engineering, and this piece by Breunig is a valuable contribution to the conversation.

Encoding Team Standards

I have observed this pattern repeatedly. A senior engineer, when asking the AI to generate a new service, instinctively specifies: follow our functional style, use the existing error-handling middleware, place it in lib/services/, make types explicit, use our logging utility rather than console.log. When asking the AI to refactor, she specifies: preserve the public contract, avoid premature abstraction, keep functions small and single-purpose. When asking it to check security, she knows to specify: check for SQL injection, verify authorization on every endpoint, ensure secrets are not hardcoded.

A less experienced developer, faced with the same tasks, asks the AI to “create a notification service” or “clean up this code” or “check if this is secure.” Same codebase. Same AI. Completely different quality gates, across every interaction, not just review.

This is a systems problem, not a skills problem. And it requires a systems solution.

Source: Encoding Team Standards, martinfowler.com

The style, conventions, and patterns of a codebase are things that developers who work on it slowly internalise through experience—through exposure to code, rarely from sitting down and reading documentation. And we know LLMs are really good at taking such documentation and incorporating it into the context of how they work. Here Rahul Garg proposes treating the instructions that govern AI interactions—generation, refactoring, security, review—as infrastructure: versioned, reviewed, and shared artefacts that encode tacit team knowledge into executable instructions, making quality consistent regardless of who is at the keyboard.
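To make the idea of instructions as shared infrastructure a little more concrete, here is a minimal Python sketch. It is not taken from Garg's article: the `InstructionSet` class, its fields and the version string are hypothetical names chosen for illustration, and only the rules themselves come from the excerpt above. The point is simply that the rules live in one reviewed, versioned place and are attached to every request, whoever types it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstructionSet:
    """Hypothetical: a reviewed, versioned bundle of team rules for one kind of AI task."""
    task: str
    version: str
    rules: tuple[str, ...]

    def render(self, request: str) -> str:
        """Prepend the team's rules to whatever the developer typed."""
        lines = [f"# Team standards for {self.task} (v{self.version})"]
        lines += [f"- {rule}" for rule in self.rules]
        lines += ["", f"Request: {request}"]
        return "\n".join(lines)


# Rules taken from the senior engineer's instructions in the excerpt.
GENERATION = InstructionSet(
    task="generation",
    version="1.0.0",  # illustrative version number
    rules=(
        "Follow our functional style.",
        "Use the existing error-handling middleware.",
        "Place new services in lib/services/.",
        "Make types explicit.",
        "Use our logging utility rather than console.log.",
    ),
)

# The junior developer's terse request now carries the same constraints.
prompt = GENERATION.render("create a notification service")
print(prompt)
```

Kept in the repository alongside the code, a file like this can be changed through the same review process as any other shared artefact.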

damn claude, that’s a lot of commits

The week of September 29 2025, there were 27.7 million public commits on GitHub. Claude Code accounted for 180,000 of them, about 0.7%. By the week of March 16 2026, total weekly commits had grown to 57.8 million (itself a 2.1x increase, likely driven in part by AI tooling), and Claude Code accounted for 2.6 million, or 4.5%. All AI coding tools combined now sit at roughly 5% of every public commit on GitHub. For context, GitHub’s Octoverse 2025 report recorded 986 million code pushes for the year, with monthly pushes topping 90 million by May 2025, and that trajectory hasn’t slowed down.

Claude Code went from 0.7% to 4.5% of all public GitHub commits in six months

Source: damn claude, that’s a lot of commits, AI Focus

Firstly, how did I not know that Semi Analysis had a podcast! Here Paul Kinlan takes a deeper look at the numbers associated with coding agent commits to public repositories on GitHub. There are certainly a bunch of caveats here, but still—the numbers are remarkable.
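The headline percentages can be sanity-checked from the counts quoted in the excerpt. This is a quick Python sketch using only those rounded figures, so the results are approximate. With these inputs the September share comes out nearer 0.65% than the quoted "about 0.7%", presumably because the published counts are themselves rounded.

```python
# Weekly public-commit counts as quoted in the excerpt (rounded figures).
weeks = {
    "2025-09-29": {"total": 27_700_000, "claude_code": 180_000},
    "2026-03-16": {"total": 57_800_000, "claude_code": 2_600_000},
}


def share(week: dict) -> float:
    """Claude Code commits as a percentage of all public commits that week."""
    return 100 * week["claude_code"] / week["total"]


early, late = weeks["2025-09-29"], weeks["2026-03-16"]
growth = late["total"] / early["total"]  # growth in total weekly commits

print(f"{share(early):.2f}%")  # 0.65%
print(f"{share(late):.2f}%")   # 4.50%
print(f"{growth:.1f}x")        # 2.1x
```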

BUILDING WITH AI

Eight years of wanting, three months of building with AI

For eight years, I’ve wanted a high-quality set of devtools for working with SQLite. Given how important SQLite is to the industry, I’ve long been puzzled that no one has invested in building a really good developer experience for it.

A couple of weeks ago, after ~250 hours of effort over three months on evenings, weekends, and vacation days, I finally released syntaqlite (GitHub), fulfilling this long-held wish. And I believe the main reason this happened was because of AI coding agents.

Of course, there’s no shortage of posts claiming that AI one-shot their project or pushing back and declaring that AI is all slop. I’m going to take a very different approach and, instead, systematically break down my experience building syntaqlite with AI, both where it helped and where it was detrimental.

Source: Eight years of wanting, three months of building with AI, Lalit Maganti

Stories like this are valuable because, particularly for those who aren’t working extensively with AI for software development, it can be hard to even imagine the scale and capability of these systems now. If you’re still a little sceptical, or perhaps you use Copilot but not an agentic system, I highly recommend reading this article to get a sense of what a capable software engineer with these technologies is now able to do in a matter of weeks, even on a very complex project. And in far less time than that, you can certainly build very sophisticated systems.

We Rewrote JSONata with AI in a Day, Saved $500K/Year

A few weeks ago, Cloudflare published “How we rebuilt Next.js with AI in one week.” One engineer and an AI model reimplemented the Next.js API surface on Vite. Cost about $1,100 in tokens.

The implementation details didn’t interest me that much (I don’t work on frontend frameworks), but the methodology did. They took the existing Next.js spec and test suite, then pointed AI at it and had it implement code until every test passed. Midway through reading, I realized we had the exact same problem – only in our case, it was with our JSON transformation pipeline.

Long story short, we took the same approach and ran with it. The result is gnata — a pure-Go implementation of JSONata 2.x. Seven hours, $400 in tokens, a 1,000x speedup on common expressions, and the start of a chain of optimizations that ended up saving us $500K/year.

Source: We Rewrote JSONata with AI in a Day, Saved $500K/Year, Reco

Stories like this are data people should take on board when thinking about how they work with AI technologies and what value those technologies offer. If you’re not working extensively with these technologies—and even if you are—it can be hard to fathom just how capable they are and what their implications might be.
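The methodology described in the excerpt, pointing a model at an existing test suite and iterating until everything passes, boils down to a simple loop. The Python sketch below shows only the shape of that loop and is not Reco's or Cloudflare's actual tooling. `implement_until_green`, `failing` and `fake_model` are hypothetical names, the test suite is a toy, and the "model" is a stand-in that replays a fixed list of attempts rather than calling any real AI service.

```python
from typing import Callable, Iterator

Candidate = Callable[[str], str]
Tests = dict[str, Callable[[Candidate], bool]]


def failing(tests: Tests, candidate: Candidate) -> list[str]:
    """Names of the tests the candidate implementation does not pass."""
    failed = []
    for name, check in tests.items():
        try:
            ok = check(candidate)
        except Exception:
            ok = False  # a crash counts as a failure
        if not ok:
            failed.append(name)
    return failed


def implement_until_green(tests: Tests,
                          generate: Callable[[list[str]], Candidate],
                          max_rounds: int = 10) -> tuple[Candidate, int]:
    """Ask `generate` for new candidates until every test passes."""
    failures = list(tests)  # nothing has passed yet
    for round_no in range(1, max_rounds + 1):
        candidate = generate(failures)  # failures are the feedback signal
        failures = failing(tests, candidate)
        if not failures:
            return candidate, round_no
    raise RuntimeError(f"still failing after {max_rounds} rounds: {failures}")


# Toy "existing test suite" for the behaviour being reimplemented.
tests: Tests = {
    "uppercases": lambda f: f("abc") == "ABC",
    "trims": lambda f: f("  abc ") == "ABC",
}

# Stand-in for the model: replays a fixed sequence of attempts.
attempts: Iterator[Candidate] = iter([
    lambda s: s,
    lambda s: s.upper(),
    lambda s: s.strip().upper(),
])


def fake_model(failures: list[str]) -> Candidate:
    return next(attempts)


impl, rounds = implement_until_green(tests, fake_model)
print(rounds)  # 3
```

The approach is only as good as the test suite it iterates against, which is why both stories start from an existing, trusted set of tests.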

I Built a Design Team Out of AI Agents

Then I decided to share some of the tools I’ve been using to learn about working with AI more deeply and to explore what it means for design as a practice. I made a set of design skills you can use with any model and a set of Inclusive design skills.

This has been pretty handy but next I wanted to explore how a group of design agents would perform and play around with that idea. There’s plenty of room for improvement here, but it has been a very fun experiment and I learned heaps.

Source: I Built a Design Team Out of AI Agents, MC Dean percolates

MC Dean shares a range of design skills she’s built for working with AI as a designer.

UNDERSTANDING AI SYSTEMS

Components of A Coding Agent

In this article, I want to cover the overall design of coding agents and agent harnesses: what they are, how they work, and how the different pieces fit together in practice. Readers of my Build a Large Language Model (From Scratch) and Build a Large Reasoning Model (From Scratch) books often ask about agents, so I thought it would be useful to write a reference I can point to.

More generally, agents have become an important topic because much of the recent progress in practical LLM systems is not just about better models, but about how we use them. In many real-world applications, the surrounding system, such as tool use, context management, and memory, plays as much of a role as the model itself. This also helps explain why systems like Claude Code or Codex can feel significantly more capable than the same models used in a plain chat interface.

Source: Components of A Coding Agent, Sebastian Raschka

Sebastian Raschka brought us the books Build a Large Language Model (From Scratch) and Build a Large Reasoning Model (From Scratch). Here he turns his attention to examining how coding agents and coding harnesses work.
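For a feel of what "the surrounding system" means, here is a deliberately tiny Python sketch of an agent loop with two of the pieces the excerpt names: tool use and context management. It is not Raschka's code. The tool names, the `trim` policy and the scripted stand-in for the model are all assumptions made for illustration, and longer-term memory is left out entirely.

```python
from typing import Callable

# Hypothetical tools the harness exposes to the model.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": lambda path: {"app.py": "print('hi')"}.get(path, "<missing>"),
    "word_count": lambda text: str(len(text.split())),
}


def trim(context: list[str], limit: int = 6) -> list[str]:
    """Context management: keep the task plus the most recent entries."""
    if len(context) <= limit:
        return context
    return context[:1] + context[-(limit - 1):]


def run_agent(task: str, model, max_steps: int = 5) -> tuple[str, list[str]]:
    """Loop: show the model the context, run the tool it picks, record the result."""
    context = [f"task: {task}"]
    for _ in range(max_steps):
        action, arg = model(trim(context))
        if action == "finish":
            return arg, context
        result = TOOLS[action](arg)  # tool use
        context.append(f"{action}({arg!r}) -> {result}")
    return "step limit reached", context


def scripted_model(context: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: read one file, then finish."""
    if len(context) == 1:
        return "read_file", "app.py"
    return "finish", f"saw {len(context) - 1} tool result(s)"


answer, context = run_agent("describe app.py", scripted_model)
print(answer)  # saw 1 tool result(s)
```

Swap the scripted function for a real model call and the loop stays the same, which is roughly the point: much of the capability lives in the harness around the model.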

Quantization from the ground up

Qwen-3-Coder-Next is an 80 billion parameter model 159.4GB in size. That’s roughly how much RAM you would need to run it, and that’s before thinking about long context windows. This is not considered a big model. Rumors have it that frontier models have over 1 trillion parameters, which would require at least 2TB of RAM. The last time I saw that much RAM in one machine was never.

But what if I told you we can make LLMs 4x smaller and 2x faster, enough to run very capable models on your laptop, all while losing only 5-10% accuracy.

Source: Quantization from the ground up, ngrok blog

Sam Rose writes incredible visual essays that explain complex concepts in very approachable ways—like this piece on prompt caching from late last year. He returns with a long and detailed explanation of quantization, the seemingly magical property of LLMs: by reducing the precision of the floating-point numbers which capture the weights of the model substantially, we don’t actually reduce the capability of the model much at all—which then allows us to run models with less memory and greater performance.
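The sizes in the excerpt follow from simple arithmetic, and the core trick can be shown in a few lines. The Python sketch below rests on two assumptions of mine rather than the essay's: that the quoted 159.4GB corresponds to roughly 16 bits per weight, and that "4x smaller" means going from 16 bits to 4. The `quantize` function is the simplest symmetric round-to-nearest scheme with a single shared scale, and the sample weights are made up. Schemes used in practice are more elaborate.

```python
def model_size_gb(params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def quantize(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Symmetric round-to-nearest quantization with one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate weights from the integer codes."""
    return [c * scale for c in codes]


print(model_size_gb(80e9, 16))  # 160.0
print(model_size_gb(80e9, 4))   # 40.0
print(model_size_gb(1e12, 16))  # 2000.0

weights = [0.12, -0.5, 0.33, 0.7, -0.07]  # made-up example values
codes, scale = quantize(weights, bits=4)
restored = dequantize(codes, scale)
worst = max(abs(w - r) for w, r in zip(weights, restored))
print(codes)  # [1, -5, 3, 7, -1]
```

Each restored weight lands within half a quantization step of the original, which gives some intuition for why so much precision can be thrown away at modest cost.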
